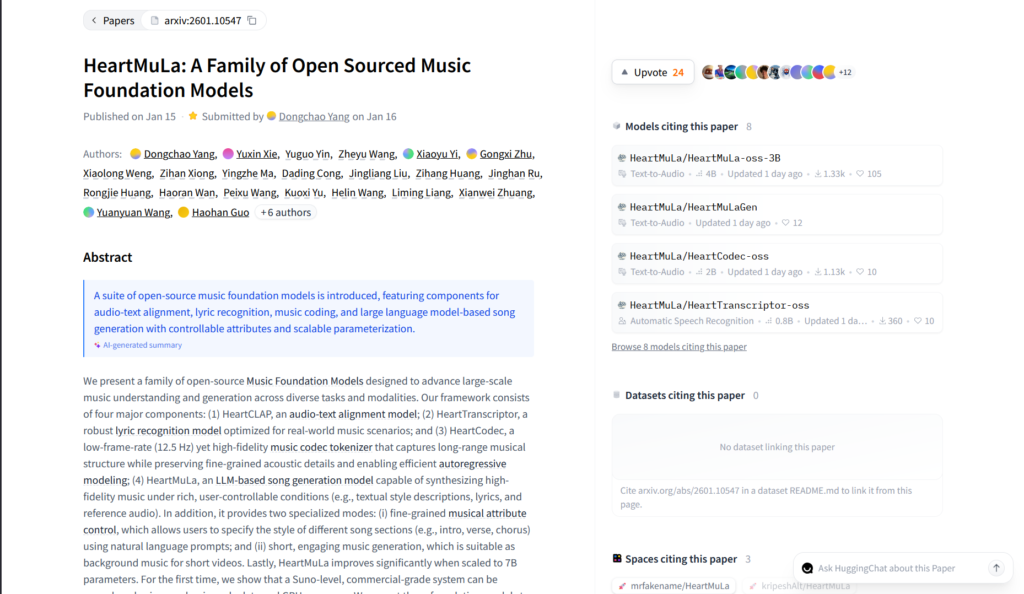

What is HeartMuLa?

HeartMuLa is an open-source family of music foundation models for generating high-quality songs from lyrics and style tags, supporting multiple languages and controllable sections.

Is HeartMuLa free to use?

Yes. It is free and open-source under Apache 2.0, with code on GitHub and model weights on Hugging Face for local inference.

When was HeartMuLa released?

The initial open-source release (HeartMuLa-oss-3B) took place on January 14-15, 2026, followed by updates such as RL-refined versions in late January.

What languages does HeartMuLa support?

It generates music with lyrics in English, Chinese, Japanese, Korean, Spanish, and potentially more, with strong multilingual conditioning.

How do I run HeartMuLa locally?

Clone the heartlib repo, install via pip, download weights from Hugging Face, and run examples/run_music_generation.py with lyrics and tags.

Does HeartMuLa have a web interface?

There is no official hosted UI, but community tools like HeartMuLa-Studio and ComfyUI nodes provide graphical interfaces for easier use.

How does HeartMuLa compare to Suno?

It offers similar quality in many cases, with the added benefits of open-source freedom, no usage limits, offline use, and multilingual strengths, though Suno has an easier UI.

What hardware is required for HeartMuLa?

A GPU with 8GB+ VRAM is recommended for smooth inference. Multi-GPU support and lazy loading help optimize memory use.
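To put the 8GB+ recommendation in context, here is a rough back-of-the-envelope sketch. It assumes a nominal 3 billion parameters (taken from the "3B" name) and counts only the weights; activations, caches, and the codec are not included, and the precision actually used at inference is an assumption here, not something stated above.

```python
def weight_memory_gib(num_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the model weights alone, in GiB."""
    return num_params * bytes_per_param / (1024 ** 3)


# Nominal parameter count implied by the "3B" name (assumption).
PARAMS_3B = 3e9

# Compare two common weight precisions; which one is used is an assumption.
for label, nbytes in [("32-bit", 4), ("16-bit", 2)]:
    print(f"{label} weights: {weight_memory_gib(PARAMS_3B, nbytes):.1f} GiB")
```

Under these assumptions, 16-bit weights would leave some headroom on an 8GB card, while 32-bit weights would not fit, which is consistent with the emphasis on lazy loading and multi-GPU support.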

HeartMuLa

About This AI

HeartMuLa is a family of open-source music foundation models released in January 2026, designed for high-quality music generation and understanding tasks.

The flagship HeartMuLa-oss-3B is a music language model that generates studio-quality songs conditioned on lyrics and tags (style, mood, genre), supporting multilingual lyrics in English, Chinese, Japanese, Korean, Spanish, and more.

It uses a cascaded decoding architecture with global and local transformers for coherent long-form music, a 12.5 Hz high-fidelity codec (HeartCodec) for efficient tokenization, a fine-tuned Whisper-based lyrics transcriber (HeartTranscriptor), and an audio-text alignment model (HeartCLAP) for retrieval.
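To give a feel for what a 12.5 Hz codec means for sequence length, the short sketch below converts a song duration into a number of codec frames. Only the frame rate comes from the description above; how many tokens each frame carries is not stated here, so the sketch counts frames only.

```python
import math

FRAME_RATE_HZ = 12.5  # HeartCodec frame rate as stated above


def codec_frames(duration_seconds: float, frame_rate_hz: float = FRAME_RATE_HZ) -> int:
    """Number of codec frames needed to cover the given duration."""
    return math.ceil(duration_seconds * frame_rate_hz)


# Each frame spans 1 / 12.5 = 0.08 seconds of audio.
for seconds in (30, 180):
    print(f"{seconds} s of audio -> {codec_frames(seconds)} frames")
```

At this rate a three-minute song is a few thousand frames, which is what makes modeling long-range song structure tractable.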

Key strengths include section-level style control (intro, verse, chorus), reference audio conditioning in advanced versions, and competitive quality against commercial tools like Suno while being fully open-source under Apache 2.0.

Inference runs locally with multi-GPU support, lazy loading for memory efficiency, and classifier-free guidance for better control.
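For readers unfamiliar with classifier-free guidance, the sketch below shows the commonly used formulation: the model is evaluated with and without the conditioning, and the two predictions are blended using the guidance scale. This is the generic technique in plain Python, not HeartMuLa's actual code, and the function name is hypothetical.

```python
def apply_cfg(cond_logits, uncond_logits, cfg_scale):
    """Blend conditional and unconditional logits.

    guided = uncond + cfg_scale * (cond - uncond)
    A scale of 1.0 returns the conditional logits unchanged; larger values
    push the output further toward the conditioning (lyrics and tags).
    """
    return [u + cfg_scale * (c - u) for c, u in zip(cond_logits, uncond_logits)]


cond = [2.0, 0.0]    # toy logits computed with lyrics/tags
uncond = [1.0, 1.0]  # toy logits computed without conditioning
print(apply_cfg(cond, uncond, cfg_scale=2.0))
```

Higher scales generally trade variety for closer adherence to the prompt, which is why the scale is exposed as a tunable setting.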

Community integrations include ComfyUI nodes and the HeartMuLa-Studio UI, and adoption was rapid (2.7k GitHub stars shortly after release).

Available via GitHub repo with pretrained weights on Hugging Face/ModelScope, it enables developers, musicians, and creators to generate unlimited music offline without licensing restrictions.

Future plans include 7B scaling, streaming inference, and enhanced fine-grained control, making it a leading open alternative in AI music synthesis.

Key Features

- Multilingual lyrics-to-music generation: Creates songs in English, Chinese, Japanese, Korean, Spanish and more from text lyrics and tags

- Section-level style control: Specify different styles/moods for intro, verse, chorus, etc. via prompts

- High-fidelity audio codec: HeartCodec at 12.5 Hz with excellent reconstruction for long-range structure

- Lyrics transcription: HeartTranscriptor (Whisper-tuned) extracts accurate lyrics from audio

- Audio-text alignment: HeartCLAP for cross-modal retrieval and similarity tasks

- Classifier-free guidance: Adjustable CFG scale for controlled generation quality

- Multi-GPU and lazy loading: Optimizes VRAM usage for larger models and inference

- Local offline deployment: Full inference without internet or API keys

- Community UIs and nodes: ComfyUI integration, HeartMuLa-Studio for a browser-based experience
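The audio-text alignment feature in the list above boils down to comparing embeddings. The sketch below illustrates the retrieval step with cosine similarity over toy vectors; it does not call HeartCLAP, and the vectors, labels, and function names are made up for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query, candidates):
    """Return (name, score) pairs sorted from most to least similar."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy embeddings standing in for model outputs (hypothetical values).
text_query = [1.0, 0.0, 0.0]
audio_clips = {
    "piano ballad": [0.9, 0.1, 0.0],
    "dance track": [0.0, 1.0, 0.0],
}
for name, score in rank_by_similarity(text_query, audio_clips):
    print(f"{name}: {score:.3f}")
```

In a real pipeline the vectors would come from the alignment model's text and audio encoders rather than being written by hand.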

Price Plans

- Free ($0): Full open-source access to models, code, weights, and inference under Apache 2.0; unlimited local generations with no fees

- Cloud/Hosted (Custom): Possible future paid hosted options from the community or third parties (none official yet)

Pros

- Completely open-source: Apache 2.0 with weights, code, and no usage limits or costs

- Multilingual excellence: Strong support for non-English lyrics and global music styles

- Controllable generation: Section styles, tags, and CFG for tailored outputs

- High audio quality: Competitive with commercial tools like Suno in fidelity

- Community momentum: Rapid integrations (ComfyUI nodes, studios) and 2.7k stars

- Offline unlimited use: Ideal for creators wanting privacy and no quotas

- Active development: RL-refined versions and 7B scaling planned

Cons

- Requires a strong GPU: The 3B model needs ample VRAM (8GB+ recommended) for smooth inference

- Technical setup: Local install, dependencies, and model download are required

- Limited hosted demo: The official demo is limited; full capability is local-only

- Early-stage scaling: 3B is current; 7B not yet released

- Generation speed: Real-time factor (RTF) around 1.0, so generating a song takes roughly as long as the song itself

- Occasional inconsistencies: Complex prompts may need prompt engineering

- No native mobile or web app: Primarily for desktop/local use
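The generation-speed point in the list above can be made concrete. Real-time factor is conventionally the ratio of processing time to audio duration, so an estimate is a single multiplication. The sketch below assumes the quoted figure of about 1.0; actual speed will depend on hardware and settings.

```python
def estimated_generation_seconds(audio_seconds: float, rtf: float = 1.0) -> float:
    """Wall-clock estimate: processing time = RTF x audio duration."""
    return audio_seconds * rtf


for audio_seconds in (30, 240):
    wait = estimated_generation_seconds(audio_seconds)
    print(f"{audio_seconds} s of music -> about {wait / 60:.1f} min of generation")
```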

Use Cases

- Music creation from lyrics: Turn written songs/poems into full tracks with style control

- Multilingual song generation: Produce music in Chinese, Japanese, Korean, etc. for global creators

- Background music for videos: Generate short engaging clips with specific moods

- Prototyping and ideation: Quickly test musical ideas offline without subscriptions

- Research and fine-tuning: Extend models for custom genres or voices

- ComfyUI workflows: Integrate into visual AI pipelines for multimedia projects

- Personal music projects: Unlimited experimentation for hobbyists and indie artists

Target Audience

- AI music enthusiasts and creators: Wanting Suno-like quality open-source and offline

- Multilingual songwriters: Working in non-English languages for authentic generation

- Indie musicians and producers: Prototyping tracks without commercial limits

- ComfyUI and Stable Diffusion users: Extending visual workflows to audio

- AI researchers in audio: Experimenting with music foundation models

- Content creators needing BGM: For videos, games, or social media

How To Use

- Clone repo: git clone https://github.com/HeartMuLa/heartlib.git and cd heartlib

- Install: pip install -e . (Python 3.10 recommended)

- Download models: Get weights from Hugging Face (HeartMuLa/HeartMuLa-oss-3B etc.)

- Run generation: python examples/run_music_generation.py --model_path ./ckpt --version 3B

- Provide inputs: Lyrics in .txt file and tags (e.g. piano,happy,romantic)

- Customize: Use --cfg_scale for guidance, --temperature for variety

- Output: The generated .mp3 is saved to disk; explore the ComfyUI nodes for a GUI
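The steps above can be sketched as a small helper that prepares the inputs and assembles the command line. The script path and the --model_path, --version, --cfg_scale, and --temperature flags come from the steps above. The --lyrics and --tags flag names, the bracketed section markers, the file names, and the numeric values are assumptions for illustration, so check the repo's README before relying on them.

```python
import shlex
import sys


def format_lyrics(sections):
    """Join (section_name, lines) pairs into one lyrics string.

    The bracketed section markers are an assumed convention for this sketch.
    """
    blocks = ["\n".join([f"[{name}]", *lines]) for name, lines in sections]
    return "\n\n".join(blocks) + "\n"


def format_tags(tags):
    """Turn a list of tags into a comma-separated string like 'piano,happy,romantic'."""
    return ",".join(tag.strip().lower() for tag in tags if tag.strip())


def build_command(model_path, lyrics_file, tags_file, cfg_scale, temperature, version="3B"):
    """Assemble the argument list for the generation script without running it."""
    return [
        sys.executable,
        "examples/run_music_generation.py",
        "--model_path", str(model_path),
        "--version", version,
        "--cfg_scale", str(cfg_scale),
        "--temperature", str(temperature),
        "--lyrics", str(lyrics_file),  # assumed flag name
        "--tags", str(tags_file),      # assumed flag name
    ]


if __name__ == "__main__":
    print(format_lyrics([("Verse", ["First line", "Second line"]), ("Chorus", ["Hook line"])]))
    print(format_tags(["Piano", " happy", "romantic "]))
    # cfg_scale and temperature values here are illustrative, not recommendations.
    command = build_command("./ckpt", "lyrics.txt", "tags.txt", cfg_scale=1.5, temperature=1.0)
    print(shlex.join(command))
```

Building the argument list separately from running it makes it easy to inspect the exact command first, then pass it to subprocess.run from inside the heartlib directory once the flag names are confirmed.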

How we rated HeartMuLa

- Performance: 4.5/5

- Accuracy: 4.6/5

- Features: 4.7/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.2/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.3/5

- Integration: 4.5/5

- Overall Score: 4.6/5
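As a quick sanity check, the overall score is consistent with a plain unweighted average of the nine category ratings. The page does not state how the overall score is computed, so the averaging method in this sketch is an assumption.

```python
from statistics import mean

# Category ratings copied from the list above.
ratings = {
    "Performance": 4.5,
    "Accuracy": 4.6,
    "Features": 4.7,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.2,
    "Customization": 4.8,
    "Data Privacy": 5.0,
    "Support": 4.3,
    "Integration": 4.5,
}

# Unweighted mean, rounded to one decimal place (assumed method).
overall = round(mean(ratings.values()), 1)
print(f"Overall: {overall}/5")
```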

HeartMuLa integration with other tools

- ComfyUI: Custom nodes for seamless integration into visual AI workflows (HeartMuLa_ComfyUI repo)

- Hugging Face: Model weights and spaces for testing/inference pipelines

- GitHub: Full source code, examples, and community contributions

- Local Audio Tools: Outputs MP3/WAV for use in DAWs like Ableton, Logic, or Audacity

- Third-Party UIs: HeartMuLa-Studio and community frontends for a browser-based experience

Best prompts optimised for HeartMuLa

- A heartfelt acoustic ballad about lost love in English, gentle piano and soft vocals, emotional verse-chorus structure, romantic melancholy mood

- Upbeat K-pop dance track in Korean, synth-heavy with catchy chorus, energetic female vocals, summer party vibe

- Traditional Chinese guzheng instrumental with modern electronic fusion, serene and meditative, flowing melody

- J-pop anime opening song in Japanese, fast-paced rock with powerful male vocals, heroic adventure theme

- Latin pop reggaeton beat in Spanish, rhythmic percussion and sensual lyrics, party club atmosphere
