What is JavisGPT?

JavisGPT is the first unified multimodal large language model for joint audio-video comprehension and synchronized sounding-video generation, featuring a SyncFusion module for spatio-temporal fusion.

When was JavisGPT released?

The model and code were released on December 26, 2025, with the paper published on December 28, 2025 (NeurIPS 2025 Spotlight).

Is JavisGPT free to use?

Yes, it is fully open-source with model weights (preview v0.1-7B-Instruct), code, and dataset available on Hugging Face and GitHub under a permissive license.

What does JavisGPT do?

It understands audiovisual inputs temporally and generates sounding videos (video + aligned audio) from multimodal instructions, excelling in synchronized tasks.

How can I try JavisGPT?

Download from Hugging Face (JavisVerse/JavisGPT-v0.1-7B-Instruct), follow GitHub README for setup and inference scripts; requires GPU for practical use.

Who developed JavisGPT?

Developed by a team including Kai Liu, Hao Fei, Tat-Seng Chua, and others under the JavisVerse project (academic/research collaboration).

What hardware is needed for JavisGPT?

Inference and generation require significant GPU resources (e.g., high-end NVIDIA GPUs); it’s a 7B+ multimodal model, so CPU-only is impractical.

Is there a demo for JavisGPT?

Check the project page (javisverse.github.io/JavisGPT-page/) for possible demos; otherwise, run locally via provided code or look for community HF Spaces.

JavisGPT

About This AI

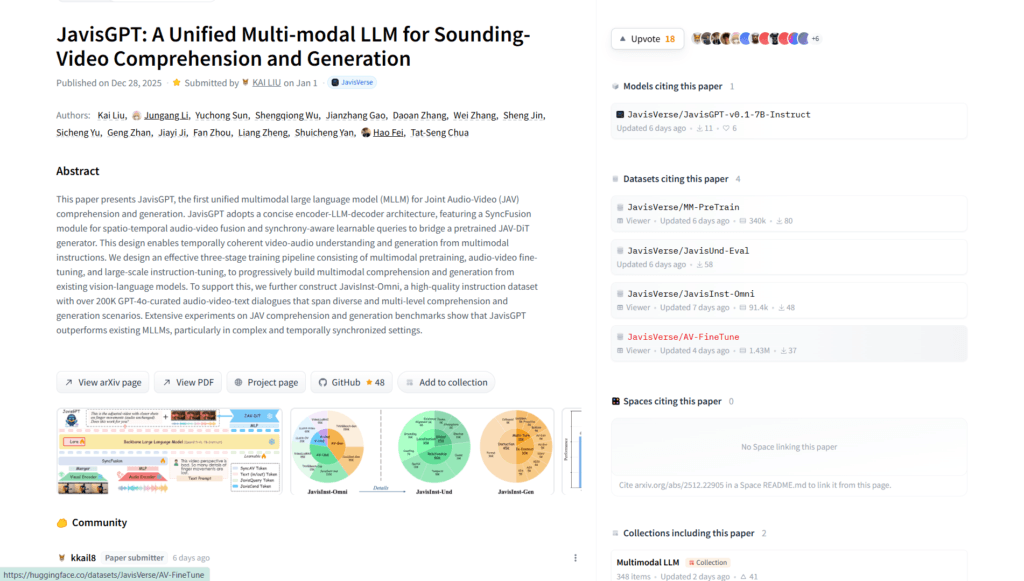

JavisGPT is the first unified multimodal large language model (MLLM) specifically designed for joint audio-video (JAV) comprehension and generation.

It enables temporally coherent understanding of audiovisual inputs and simultaneous generation of synchronized sounding videos from multimodal instructions.

The model features a concise encoder-LLM-decoder architecture with the innovative SyncFusion module for spatio-temporal audio-video fusion and synchrony-aware learnable queries that bridge a pretrained JAV-DiT generator.

Trained via a three-stage pipeline: multimodal pretraining, audio-video fine-tuning, and large-scale instruction-tuning on the JavisInst-Omni dataset (over 200K GPT-4o-curated audio-video-text dialogues).

It excels in complex, temporally synchronized tasks, outperforming existing MLLMs on JAV comprehension and generation benchmarks, including synchronized audio-video QA and high-quality text-to-audio-video generation.

Released as an open-source project in late December 2025 (NeurIPS 2025 Spotlight), with code, preview checkpoint (JavisGPT-v0.1-7B-Instruct), and dataset available on Hugging Face and GitHub.

Ideal for researchers, developers, and creators working on audiovisual AI, video understanding, sounding video synthesis, multimodal agents, and applications requiring aligned audio-visual intelligence.

As a research-oriented model, it requires technical setup (e.g., via GitHub code) and is not a ready-to-use consumer app but supports inference and fine-tuning for advanced users.

Key Features

- Joint Audio-Video Comprehension: Understands synchronized audiovisual inputs with temporal coherence

- Sounding-Video Generation: Generates videos with aligned audio from text or multimodal prompts

- SyncFusion Module: Spatio-temporal fusion for effective audio-video alignment

- Synchrony-Aware Queries: Learnable queries bridging LLM to JAV-DiT generator

- Multimodal Instruction Following: Handles complex instructions involving audio, video, and text

- Temporally Coherent Outputs: Ensures synchronization across time for realistic sounding videos

- Three-Stage Training: Multimodal pretraining, AV fine-tuning, large-scale instruction-tuning

- JavisInst-Omni Dataset: 200K+ GPT-4o-curated dialogues for diverse JAV tasks

- Benchmark Leadership: Superior performance on synchronized AV QA and generation tasks

- Open-Source Release: Code, model checkpoint, dataset on Hugging Face and GitHub

Price Plans

- Free ($0): Fully open-source model weights, code, and dataset under permissive license; no usage fees

- Local/Cloud Compute (Variable): Costs depend on hardware or cloud GPU rental for running inference/generation

Pros

- Pioneering unified JAV model: First to jointly handle comprehension and generation in one framework

- Strong temporal synchronization: Excels at aligned audio-video outputs and understanding

- Open-source accessibility: Full code, weights (preview 7B-Instruct), and dataset freely available

- Research-grade performance: Outperforms prior MLLMs on specialized JAV benchmarks

- Innovative architecture: SyncFusion and synchrony-aware queries enable high-quality fusion

- Extensive dataset: JavisInst-Omni provides rich, curated multimodal instruction data

- NeurIPS Spotlight recognition: Accepted as high-impact work in top AI conference

Cons

- Research-focused: Requires technical setup (no simple web app; needs code execution)

- Preview stage: v0.1 release means potential instability or limited capabilities

- Compute-intensive: Large multimodal model demands significant GPU resources for inference/generation

- No consumer interface: Lacks polished UI; users must run locally or via HF Spaces if available

- Limited public stats: New release with no widespread user numbers or broad adoption yet

- Specialized scope: Focused on sounding-video tasks; less general-purpose than text LLMs

- Setup complexity: Requires following GitHub instructions for installation and use

Use Cases

- Sounding-video generation: Create videos with synchronized audio from text or multimodal prompts

- Audiovisual understanding: Analyze and answer questions about videos with sound

- Multimodal research: Experiment with joint audio-video models and fine-tuning

- Video captioning/QA: Generate descriptions or answers for sounding videos

- Creative AI applications: Build tools for synchronized media synthesis

- Academic benchmarking: Test and compare JAV comprehension/generation performance

- Agent development: Integrate into multimodal agents handling audio-visual inputs

Target Audience

- AI researchers: Studying multimodal LLMs, audio-video fusion, and generation

- Developers and engineers: Building audiovisual AI applications or prototypes

- Multimodal AI enthusiasts: Experimenting with open-source JAV models

- Academic institutions: Using for papers, theses, or course projects in AI

- Creative technologists: Exploring sounding-video creation techniques

- Computer vision/speech communities: Interested in unified audio-video models

How To Use

- Visit project: Go to https://github.com/JavisVerse/JavisGPT or Hugging Face page

- Install dependencies: Follow README for environment setup (PyTorch, transformers, etc.)

- Download model: Pull JavisGPT-v0.1-7B-Instruct checkpoint from Hugging Face

- Run inference: Use provided scripts for comprehension or generation tasks

- Input multimodal data: Provide video/audio/text prompts as per examples

- Generate outputs: Run model to produce answers or sounding videos

- Explore demos: Check project page for any online demos or HF Spaces if available

How we rated JavisGPT

- Performance: 4.7/5

- Accuracy: 4.6/5

- Features: 4.8/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 3.8/5

- Customization: 4.5/5

- Data Privacy: 4.9/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.6/5

JavisGPT integration with other tools

- Hugging Face Ecosystem: Model hosted on HF for easy download, inference via transformers library

- GitHub Codebase: Full implementation scripts for local setup, fine-tuning, and evaluation

- PyTorch/Transformers: Built on standard deep learning frameworks for seamless extension

- JAV-DiT Generator: Integrated pretrained diffusion transformer for video-audio synthesis

- Research Toolchains: Compatible with multimodal evaluation suites and benchmark frameworks

Best prompts optimised for JavisGPT

- Generate a sounding video of a serene mountain lake at dawn with birds chirping, gentle waves, and soft wind sounds, cinematic style, 8 seconds

- Analyze this video clip: describe the scene, actions, dialogue, and emotional tone while synchronizing audio events with visuals

- Create a short sounding video from this text: A chef preparing fresh sushi in a bustling Tokyo kitchen, chopping sounds, sizzling, upbeat Japanese music

- Answer detailed questions about the audio-visual content in this video: What is the person saying? What background noises are present? How do they relate to the actions?

- Generate synchronized audio-video: A futuristic robot dancing in a neon city street at night, electronic music beat, footsteps echoing

FAQs

Newly Added Tools

About Author