What is JavisGPT?

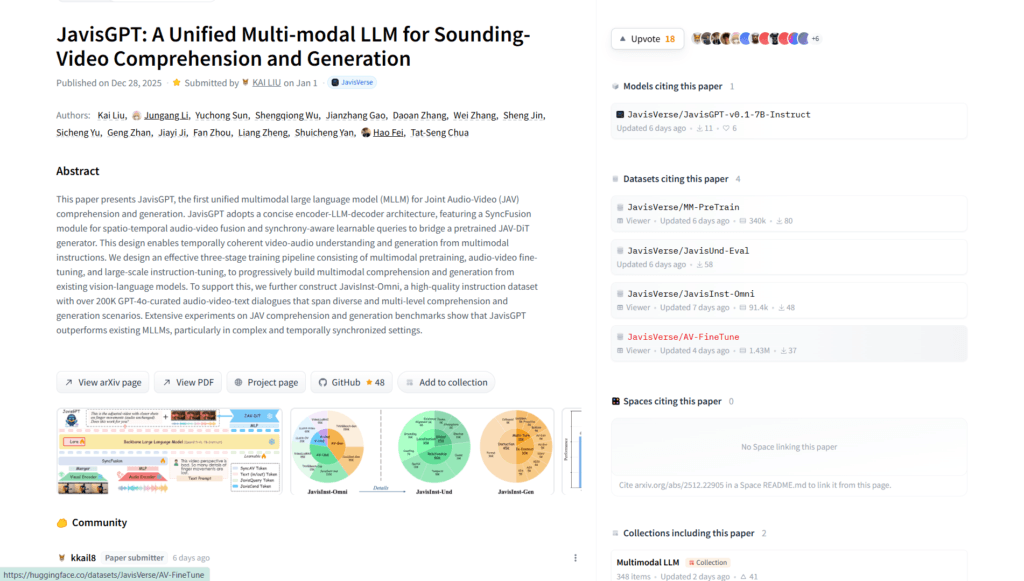

JavisGPT is the first unified multimodal large language model for joint audio-video comprehension and synchronized sounding-video generation, featuring a SyncFusion module for spatio-temporal fusion.

When was JavisGPT released?

The model and code were released on December 26, 2025, with the paper published on December 28, 2025 (NeurIPS 2025 Spotlight).

Is JavisGPT free to use?

Yes, it is fully open-source with model weights (preview v0.1-7B-Instruct), code, and dataset available on Hugging Face and GitHub under a permissive license.

What does JavisGPT do?

It understands audiovisual inputs temporally and generates sounding videos (video + aligned audio) from multimodal instructions, excelling in synchronized tasks.

How can I try JavisGPT?

Download from Hugging Face (JavisVerse/JavisGPT-v0.1-7B-Instruct), follow GitHub README for setup and inference scripts; requires GPU for practical use.

Who developed JavisGPT?

Developed by a team including Kai Liu, Hao Fei, Tat-Seng Chua, and others under the JavisVerse project (academic/research collaboration).

What hardware is needed for JavisGPT?

Inference and generation require significant GPU resources (e.g., high-end NVIDIA GPUs); it’s a 7B+ multimodal model, so CPU-only is impractical.

Is there a demo for JavisGPT?

Check the project page (javisverse.github.io/JavisGPT-page/) for possible demos; otherwise, run locally via provided code or look for community HF Spaces.