What is Qwen-Audio?

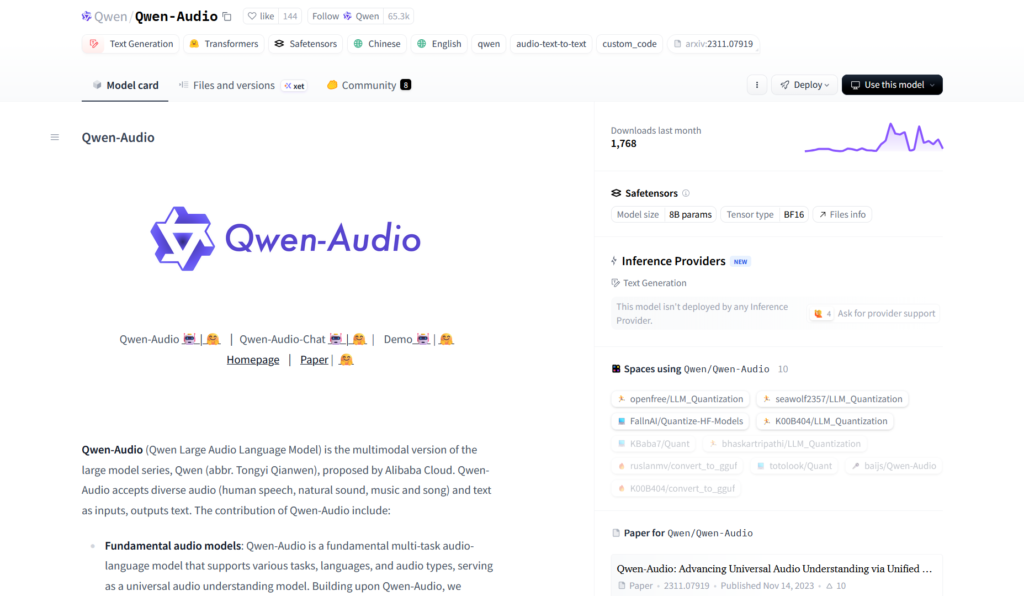

Qwen-Audio (Qwen2-Audio series) is Alibaba’s open-source large audio-language model for processing diverse audio inputs (speech, sounds, music) and generating text outputs like transcription, analysis, translation, and voice chat responses.

When was Qwen2-Audio released?

Qwen2-Audio was released on August 9, 2024, as the next version of Qwen-Audio with improved capabilities.

Is Qwen-Audio free to use?

Yes. Qwen2-Audio-7B and Qwen2-Audio-7B-Instruct are open-weight models released under Apache 2.0 on Hugging Face and ModelScope, with no usage fees for local or self-hosted deployment.

What languages does Qwen-Audio support?

Qwen2-Audio offers multilingual speech recognition, translation, and analysis. An exact language count is not specified, but the model performs well on multilingual benchmarks such as Fleurs and CoVoST2.

Can Qwen-Audio handle non-speech audio?

Yes, it processes music, environmental sounds, mixed audio, and performs sound event classification, emotion detection from vocals, and more.

How do I run Qwen-Audio locally?

Install the latest transformers from the GitHub source, load the model from 'Qwen/Qwen2-Audio-7B-Instruct', prepare the audio and text inputs with the processor, and generate the output.

What benchmarks does Qwen-Audio excel on?

It reports state-of-the-art or competitive results on AIR-Benchmark for audio instruction-following; LibriSpeech, Common Voice, and Fleurs for ASR; CoVoST2 for speech translation; Meld for emotion recognition; Vocalsound for classification; and more.

Does Qwen-Audio support voice chat?

Yes, users can speak instructions directly for natural conversational interaction, with multi-turn support.

Qwen-Audio

About This AI

Qwen-Audio (including Qwen2-Audio series) is Alibaba’s open-source large audio-language model family capable of processing diverse audio inputs and generating text outputs.

The latest Qwen2-Audio (released August 2024) supports speech recognition, translation, audio analysis, emotion detection, sound classification, and direct voice chat instructions.

It handles various audio signals: speech in multiple languages, non-speech sounds (music, environmental noises), mixed audio, and combines audio with text prompts for complex understanding.

Key strengths include high performance on benchmarks such as LibriSpeech, Common Voice, Fleurs, Aishell2, CoVoST2, Meld, Vocalsound, and AIR-Benchmark, often surpassing earlier models, including Whisper on speech tasks and Gemini-1.5-Pro on audio-centric instruction-following.

The model enables voice chat (users speak instructions), multi-turn conversations, and tasks like ASR, speech emotion recognition, audio captioning, and sound event detection.

Open-weight versions include Qwen2-Audio-7B (pretrained) and Qwen2-Audio-7B-Instruct (chat-tuned), available on Hugging Face and ModelScope under the Apache 2.0 license.

It integrates with Hugging Face Transformers for easy inference, supports various audio formats, and is suitable for developers building voice assistants, transcription tools, audio analyzers, and multimodal applications.

With strong multilingual support and robust handling of noisy or complex audio, Qwen-Audio advances universal audio understanding and supports community development of open multimodal AI.

Key Features

- Universal audio understanding: Processes speech, music, environmental sounds, mixed audio, and non-speech events

- Voice chat mode: Users can speak instructions directly for natural conversational interaction

- Multimodal input: Accepts audio plus text prompts for combined analysis and reasoning

- High-accuracy ASR: Strong performance on multilingual speech recognition benchmarks

- Speech emotion recognition: Detects emotions from vocal audio inputs

- Sound event classification: Identifies and classifies non-speech sounds like music or environmental noises

- Audio captioning: Generates descriptive text for audio content

- Speech translation: Translates spoken content across languages

- Multi-turn conversation: Supports ongoing dialogue with audio/text inputs

- Open-source integration: Fully supported in Hugging Face Transformers with easy inference scripts
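
The multimodal-input, voice-chat, and multi-turn features above all come down to how a conversation history is laid out before it reaches the processor. The sketch below follows the chat-message layout shown in the Qwen2-Audio-7B-Instruct model card; the helper names `user_turn` and `assistant_turn` and the file names are hypothetical, used only for illustration.

```python
def user_turn(text=None, audio=None):
    """Build one user message holding audio, text, or both.

    Hypothetical helper; `audio` is a path or URL that your own
    loading code resolves later.
    """
    parts = []
    if audio is not None:
        parts.append({"type": "audio", "audio_url": audio})
    if text is not None:
        parts.append({"type": "text", "text": text})
    if not parts:
        raise ValueError("a user turn needs audio, text, or both")
    return {"role": "user", "content": parts}


def assistant_turn(text):
    """Record an earlier model reply so the next turn has context."""
    return {"role": "assistant", "content": text}


# Illustrative history; the file names are placeholders, not real assets.
history = [
    {"role": "system", "content": "You are a helpful assistant."},
    # Audio analysis: an audio clip plus a text prompt.
    user_turn(audio="clip1.wav", text="What sound is this?"),
    assistant_turn("It sounds like glass shattering."),
    # Voice chat: the spoken instruction is the only input.
    user_turn(audio="spoken_question.wav"),
]
```

A history like this would then be passed to the processor's chat template; each audio entry still has to be loaded into a waveform by the calling code.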

Price Plans

- Free ($0): Full open-source access to model weights, code, and inference tools on Hugging Face/ModelScope under Apache 2.0; no usage fees

- Cloud API (Paid via DashScope): Optional Alibaba Cloud hosted inference with pay-per-use or subscription pricing

Pros

- Top-tier benchmark performance: Outperforms or matches SOTA models like Whisper and Gemini-1.5-Pro on audio tasks

- Fully open weights: 7B models available free on Hugging Face/ModelScope under Apache 2.0

- Versatile audio handling: Excels at both speech and non-speech audio understanding

- Voice interaction innovation: Enables natural spoken instructions and chat without typing

- Multilingual strength: Robust support for diverse languages in ASR and translation

- Easy deployment: Hugging Face Transformers integration for quick local/cloud use

- Community-driven: Open-source encourages extensions, fine-tuning, and applications

Cons

- Requires GPU for best speed: 7B model inference benefits from high-end hardware

- Setup for local run: Needs transformers library and model download; not plug-and-play web app

- Audio input limits: May have constraints on very long or extremely noisy files

- No native mobile/edge support: Primarily for server/developer use; not optimized for on-device inference

- Recent release: Community tools/fine-tunes still emerging compared to older models

- Potential VRAM needs: Full precision inference requires sufficient GPU memory

- Focus on analysis: Outputs text only, so spoken replies require pairing it with a separate text-to-speech model such as a Qwen TTS variant
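
To put the GPU and VRAM points above in rough numbers, the weights-only memory footprint can be estimated as parameter count times bytes per parameter. This is a back-of-the-envelope sketch: the 7e9 parameter count simply takes the "7B" label at face value (the real count may differ), and activations, the KV cache, and framework overhead are not included.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {
    "float32": 4.0,
    "bfloat16": 2.0,
    "float16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params, dtype):
    """Weights-only memory in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Assumption: "7B" read literally as 7e9 parameters.
NOMINAL_PARAMS = 7e9

for dtype in ("float32", "float16", "int8", "int4"):
    print(f"{dtype:>8}: {weight_memory_gb(NOMINAL_PARAMS, dtype):.1f} GB")
```

Under these assumptions, half precision needs about 14 GB for the weights alone, which is why full-precision inference is listed as a hardware concern.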

Use Cases

- Voice assistants: Build conversational agents that understand spoken queries and audio context

- Meeting transcription: Accurate multilingual ASR with emotion and key event detection

- Audio content analysis: Classify sounds, caption clips, or detect events in videos/podcasts

- Speech translation tools: Real-time or batch translation of spoken content

- Emotion-aware apps: Customer service bots detecting user sentiment from voice

- Research and development: Fine-tune for custom audio tasks or multimodal systems

- Accessibility features: Live captioning or audio description for media
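
For long recordings such as meetings or podcasts, one common workaround for audio-length limits is to split the waveform into fixed windows and process each window separately. The sketch below only computes the sample ranges. The 16 kHz sample rate and 30-second window are assumptions chosen for illustration, not limits stated on this page, and `chunk_bounds` is a hypothetical helper.

```python
def chunk_bounds(num_samples, sample_rate=16000, window_s=30.0, overlap_s=0.0):
    """Return (start, end) sample indices covering a long recording.

    Defaults are illustrative assumptions; adjust them to the limits
    of the model and feature extractor you actually use.
    """
    window = int(window_s * sample_rate)
    step = window - int(overlap_s * sample_rate)
    if window <= 0 or step <= 0:
        raise ValueError("window must be positive and longer than the overlap")

    bounds = []
    start = 0
    while start < num_samples:
        end = min(start + window, num_samples)
        bounds.append((start, end))
        if end == num_samples:
            break  # the final (possibly shorter) chunk has been emitted
        start += step
    return bounds


# A 70-second recording at 16 kHz, split with no overlap.
chunks = chunk_bounds(num_samples=70 * 16000)
print(chunks)
```

Each `(start, end)` pair can be used to slice the waveform array before handing it to the processor. A small overlap helps avoid cutting words at chunk boundaries, at the cost of some repeated text that has to be merged afterwards.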

Target Audience

- AI developers and researchers: Building voice/multimodal applications or experimenting with audio LLMs

- App builders: Integrating speech understanding into products like assistants or analyzers

- Content creators: Analyzing podcasts, videos, or music for insights/transcripts

- Enterprises: Customer support, call centers, or media monitoring needing audio intelligence

- Open-source enthusiasts: Fine-tuning or contributing to audio-language models

- Educators and students: Learning about audio AI or building prototypes

How To Use

- Install transformers: pip install git+https://github.com/huggingface/transformers.git for latest support

- Load model: from transformers import Qwen2AudioForConditionalGeneration, AutoProcessor; processor = AutoProcessor.from_pretrained('Qwen/Qwen2-Audio-7B-Instruct'); model = Qwen2AudioForConditionalGeneration.from_pretrained('Qwen/Qwen2-Audio-7B-Instruct')

- Prepare input: Use the processor to combine an audio clip (from a file or a microphone recording) with a text prompt

- Generate output: model.generate(**inputs, max_new_tokens=...) for a text response or analysis

- Voice chat mode: Pass a spoken instruction as the audio input, with no text prompt, and get a text response

- Run locally: Use GPU for faster inference; CPU possible but slower

- Try demo: Access Hugging Face or ModelScope spaces for no-code online testing
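
The steps above can be sketched as a single function. This is a minimal sketch modelled on the usage example in the Qwen2-Audio-7B-Instruct model card, not a definitive implementation. It assumes `transformers`, `librosa`, `torch`, and `accelerate` (needed for `device_map="auto"`) are installed, and the function names `collect_audio_sources` and `describe_audio` are hypothetical.

```python
def collect_audio_sources(conversation):
    """Return every audio path or URL referenced in the conversation, in order."""
    sources = []
    for message in conversation:
        content = message["content"]
        if isinstance(content, list):
            for part in content:
                if part.get("type") == "audio":
                    sources.append(part["audio_url"])
    return sources


def describe_audio(audio_path, prompt, max_new_tokens=256):
    """Run one audio + text query and return the model's text reply."""
    # Heavy imports stay inside the function so the helper above
    # works even when these packages are not installed.
    import librosa
    from transformers import AutoProcessor, Qwen2AudioForConditionalGeneration

    model_id = "Qwen/Qwen2-Audio-7B-Instruct"
    processor = AutoProcessor.from_pretrained(model_id)
    model = Qwen2AudioForConditionalGeneration.from_pretrained(
        model_id, device_map="auto"
    )

    conversation = [
        {"role": "user", "content": [
            {"type": "audio", "audio_url": audio_path},
            {"type": "text", "text": prompt},
        ]},
    ]
    text = processor.apply_chat_template(
        conversation, add_generation_prompt=True, tokenize=False
    )

    # Load each clip at the sampling rate the feature extractor expects.
    sampling_rate = processor.feature_extractor.sampling_rate
    audios = [
        librosa.load(source, sr=sampling_rate)[0]
        for source in collect_audio_sources(conversation)
    ]

    # The model card passes waveforms as `audios`; some later transformers
    # releases name this keyword `audio`, so check your installed version.
    inputs = processor(text=text, audios=audios, return_tensors="pt", padding=True)
    inputs = inputs.to(model.device)

    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Drop the prompt tokens so only the newly generated reply is decoded.
    output_ids = output_ids[:, inputs["input_ids"].shape[1]:]
    return processor.batch_decode(
        output_ids, skip_special_tokens=True, clean_up_tokenization_spaces=False
    )[0]
```

A call such as `describe_audio("meeting.wav", "Transcribe this clip.")` (with a placeholder file name) downloads the weights on first use and returns the reply as a string. Loading the model once and reusing it across calls would be the natural next step for anything beyond a quick test.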

How we rated Qwen-Audio

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.4/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.5/5

- Integration: 4.7/5

- Overall Score: 4.7/5

Qwen-Audio integration with other tools

- Hugging Face Transformers: Official support for loading, inference, and fine-tuning

- ModelScope: Alibaba's platform for model hosting, demos, and additional tools

- Local Hardware: Runs on GPUs via PyTorch; compatible with consumer setups

- Custom Apps: Embed in voice assistants or analysis pipelines with Python code

- Web Demos: Hugging Face Spaces for quick online testing without installation

Best prompts optimised for Qwen-Audio

- Transcribe this spoken English audio and translate it into French, preserving a natural tone

- Analyze this audio clip: describe the scene, detect emotions, identify sounds, and summarize key events

- Respond to this voice instruction as a helpful assistant: [spoken query about weather or task]

- Classify the emotion in this speech sample as angry, happy, sad, or neutral, and explain why

- Generate a detailed caption and sound event list for this environmental audio recording
