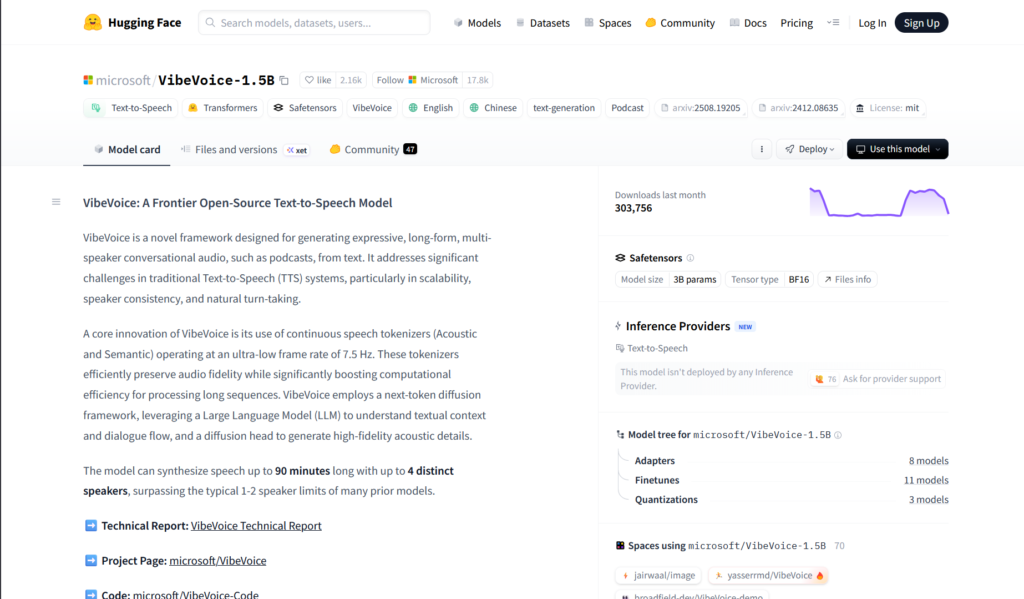

What is VibeVoice?

VibeVoice is Microsoft’s open-source TTS framework for generating expressive, long-form, multi-speaker conversational audio such as podcasts, with emotional delivery, singing, and durations of up to 90 minutes.

When was VibeVoice released?

The TTS model was open-sourced in August 2025, and an ASR variant was added in January 2026. The repository was temporarily disabled due to misuse concerns.

Is VibeVoice free to use?

Yes, it is a fully open-source research framework with weights on Hugging Face. There is no cost to download it and run it locally, subject to the responsible-use guidelines.

What makes VibeVoice special?

It supports 90-minute multi-speaker audio, spontaneous emotions, singing with music, cross-lingual expression, and efficient long-sequence processing via 7.5 Hz tokenizers.

Does VibeVoice support voice cloning?

It can reproduce a voice from a short sample for expressive synthesis, but its guidelines explicitly prohibit unauthorized cloning, satire, deepfakes, and real-time voice conversion.

What languages does VibeVoice support?

English and Mandarin are the languages primarily demonstrated, with cross-lingual capabilities that preserve emotional expression between them.

How many speakers can VibeVoice handle?

Up to 4 distinct speakers in long-form conversations with natural turn-taking and consistency.

Where can I download VibeVoice?

Model weights are on Hugging Face (microsoft/VibeVoice-1.5B). The code is on the GitHub page, although access may be limited while the repository is temporarily disabled.

VibeVoice

About This AI

VibeVoice is a novel open-source framework from Microsoft for generating expressive, long-form, multi-speaker conversational audio such as podcasts from text.

It supports up to 90 minutes of continuous speech with up to 4 distinct speakers, natural turn-taking, spontaneous emotions, singing, and background music integration.

Its core innovations are continuous speech tokenizers (Acoustic and Semantic) that run at an ultra-low 7.5 Hz frame rate, which keeps long sequences efficient, and a next-token diffusion framework that pairs an LLM for textual context and dialogue flow with a diffusion head for high-fidelity acoustics.

It excels at context-aware expression including unscripted emotional nuances, cross-lingual capabilities (English and Mandarin demonstrated), and realistic prosody.

The model family includes VibeVoice-TTS for synthesis and later VibeVoice-ASR for long-form transcription with structured outputs (speaker, timestamps, content).

Released in August 2025 with weights on Hugging Face (e.g., microsoft/VibeVoice-1.5B), it emphasizes responsible use. The repository was temporarily disabled due to misuse concerns, but the project continues to advance speech synthesis research.

Applications include podcast production, multi-speaker dialogues, emotional voiceovers, singing generation, and cross-lingual audio.

As an open-source research framework, it promotes collaboration in TTS while prohibiting out-of-scope uses like unauthorized voice cloning or real-time deepfakes.

Demos showcase spontaneous arguments, singing lyrics, tech podcasts with background music, sports debates, and climate discussions, highlighting its expressive and long-form strengths.

Key Features

- Long-form multi-speaker synthesis: Generates up to 90 minutes of coherent audio with up to 4 distinct speakers and natural turn-taking

- Expressive and emotional speech: Captures spontaneous emotions, nuances, prosody, and unscripted dynamics

- Singing and music integration: Supports singing lyrics with background music in generated audio

- Cross-lingual capabilities: Demonstrated English-Mandarin translation and expression preservation

- Ultra-low frame rate tokenizers: Acoustic/Semantic tokenizers at 7.5 Hz for efficient long-sequence processing

- Next-token diffusion framework: LLM for context/dialogue + diffusion head for high-fidelity acoustics

- Context-aware generation: Understands dialogue flow, speaker roles, and emotional cues from text

- Open-source research framework: Weights and code for TTS (and ASR variant) to advance speech synthesis
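To put the 7.5 Hz frame rate in perspective, the short calculation below counts how many tokenizer frames a 90-minute recording would need. It is a back-of-the-envelope sketch, not a description of the model's internals: the 50 Hz comparison rate is a hypothetical value chosen only for contrast, and the helper name `frames_for` is made up for this example.

```python
# Back-of-the-envelope sequence lengths for long-form audio.
FRAME_RATE_HZ = 7.5        # tokenizer frame rate listed in the features above
MAX_DURATION_MIN = 90      # maximum duration listed in the features above
COMPARISON_RATE_HZ = 50.0  # hypothetical higher-rate tokenizer, for contrast only


def frames_for(duration_min: float, frame_rate_hz: float) -> int:
    """Number of tokenizer frames needed to cover `duration_min` minutes."""
    return round(duration_min * 60 * frame_rate_hz)


low_rate_frames = frames_for(MAX_DURATION_MIN, FRAME_RATE_HZ)
high_rate_frames = frames_for(MAX_DURATION_MIN, COMPARISON_RATE_HZ)

print(low_rate_frames)   # 40500
print(high_rate_frames)  # 270000
```

Under these assumptions, a full 90-minute session is roughly 40,500 frames per tokenizer stream, several times shorter than the same audio at the hypothetical 50 Hz rate.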

Price Plans

- Free ($0): Fully open-source research framework with model weights available on Hugging Face; no usage fees

- Commercial/Enterprise (N/A): Not specified; intended for research, not production deployment without review

Pros

- Breakthrough long-form stability: Sustains extended conversations with more speakers than the 1-2 typical of TTS systems

- Highly expressive output: Realistic emotions, singing, and spontaneous nuances for lifelike audio

- Efficient architecture: Low frame rate enables processing of very long sequences without collapse

- Open-source accessibility: Weights on Hugging Face for research and development use

- Multi-speaker naturalness: Strong turn-taking and speaker distinction in dialogues

- Cross-lingual potential: Preserves expression across English and Mandarin

- Responsible AI focus: Guidelines against misuse like unauthorized cloning or deepfakes

Cons

- Repo temporarily disabled: Access limited due to misuse concerns (as of late 2025)

- Requires powerful hardware: Diffusion-based model demands GPU for inference

- Setup for local use: Needs technical knowledge to run from Hugging Face weights

- Limited languages demonstrated: Primarily English/Mandarin; broader support unclear

- No real-time low-latency focus: Optimized for offline long-form rather than streaming

- Responsible use restrictions: Prohibits voice impersonation without consent or deepfake apps

- Early research stage: May have artifacts in edge cases or complex emotions

Use Cases

- Podcast production: Generate full episodes with multiple hosts, guests, emotions, and background music

- Conversational audio creation: Synthesize dialogues, debates, interviews, or storytelling with natural flow

- Expressive voiceovers: Add emotional depth to narrations, audiobooks, or character voices

- Singing and music demos: Create sung lyrics or musical segments from text

- Cross-lingual content: Produce audio translations preserving original expression

- Research in TTS: Extend or benchmark expressive multi-speaker synthesis

- Educational audio: Generate engaging lectures or discussions with varied speakers

Target Audience

- AI speech researchers: Advancing TTS with expressive, long-form capabilities

- Content creators: Podcasters, audiobook producers needing synthetic multi-speaker audio

- Developers and experimenters: Running open-source models locally for custom applications

- Multimedia artists: Incorporating emotional/singing voices in projects

- Language tech enthusiasts: Exploring cross-lingual expressive synthesis

- Microsoft ecosystem users: Interested in frontier voice AI research

How To Use

- Access repo (when available): Visit microsoft.github.io/VibeVoice or Hugging Face microsoft/VibeVoice-1.5B

- Download model weights: Get from Hugging Face for local inference

- Install dependencies: Set up environment with required libraries (PyTorch, etc.)

- Prepare input: Provide text script with speaker tags and optional emotion cues

- Run generation: Use provided inference scripts for TTS synthesis

- Listen and iterate: Generate audio samples; refine prompts for better expression

- Follow guidelines: Adhere to responsible use policy against misuse
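The "Prepare input" step can be sketched as a small helper that turns a list of dialogue turns into a tagged script and enforces the 4-speaker limit. This is only an illustration: the `Speaker N:` tag format and the function name `build_script` are assumptions made for this example, so check the inference scripts that ship with the model for the exact input format they expect.

```python
from typing import List, Tuple

MAX_SPEAKERS = 4  # speaker limit stated for VibeVoice in this review


def build_script(turns: List[Tuple[int, str]]) -> str:
    """Format (speaker_number, text) turns as 'Speaker N: text' lines.

    The 'Speaker N:' tag format is an assumption made for illustration.
    """
    if not turns:
        raise ValueError("script needs at least one turn")
    speakers = {speaker for speaker, _ in turns}
    if any(s < 1 for s in speakers):
        raise ValueError("speaker numbers start at 1")
    if len(speakers) > MAX_SPEAKERS:
        raise ValueError(
            f"{len(speakers)} speakers exceeds the limit of {MAX_SPEAKERS}"
        )
    lines = []
    for speaker, text in turns:
        cleaned = " ".join(text.split())  # collapse whitespace: one line per turn
        if not cleaned:
            raise ValueError("empty turn text")
        lines.append(f"Speaker {speaker}: {cleaned}")
    return "\n".join(lines)


script = build_script([
    (1, "I can't believe you did it again."),
    (2, "Wait, let me explain..."),
])
print(script)
```

Validating the speaker count before generation is cheaper than discovering the problem after a long synthesis run.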

How we rated VibeVoice

- Performance: 4.6/5

- Accuracy: 4.5/5

- Features: 4.8/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.0/5

- Customization: 4.7/5

- Data Privacy: 4.9/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.6/5
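The review does not say how the overall score is derived. One plausible reading, shown here as an assumption rather than the site's actual method, is an unweighted mean of the nine category scores rounded to one decimal place. The check below reproduces the listed figure under that assumption.

```python
from statistics import mean

# Category scores copied from the list above.
category_scores = {
    "Performance": 4.6,
    "Accuracy": 4.5,
    "Features": 4.8,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.0,
    "Customization": 4.7,
    "Data Privacy": 4.9,
    "Support": 4.2,
    "Integration": 4.4,
}

# Assumption: the overall score is a plain average, rounded to one decimal.
overall = round(mean(category_scores.values()), 1)
print(overall)  # 4.6
```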

VibeVoice integration with other tools

- Hugging Face: Model weights and inference examples hosted for easy download and community use

- GitHub Repository: Codebase (when enabled) for local setup, extensions, and contributions

- Audio Production Tools: Export generated audio (WAV/MP3) for import into DAWs like Audacity, Adobe Audition, or Reaper

- Research Frameworks: Compatible with PyTorch ecosystems for fine-tuning or integration in TTS pipelines

- Local Deployment: Runs on personal GPUs; no cloud required for core synthesis
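For the audio-production route, the sketch below writes mono samples to a 16-bit PCM WAV file that a DAW can import, using only Python's standard library. It is a minimal illustration under stated assumptions: the 24 kHz sample rate is assumed rather than taken from this review, the file name is arbitrary, and a synthetic 440 Hz tone stands in for real model output.

```python
import math
import struct
import wave

SAMPLE_RATE = 24_000  # assumed sample rate; use the rate your model output reports


def write_wav(path: str, samples: list[float], sample_rate: int = SAMPLE_RATE) -> None:
    """Write mono float samples in [-1.0, 1.0] as a 16-bit PCM WAV file."""
    pcm = bytearray()
    for s in samples:
        clipped = max(-1.0, min(1.0, s))  # guard against out-of-range values
        pcm += struct.pack("<h", int(clipped * 32767))
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)   # mono
        wav_file.setsampwidth(2)   # 2 bytes = 16-bit samples
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(bytes(pcm))


# Stand-in signal: one second of a 440 Hz tone instead of real model output.
tone = [0.3 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE)]
write_wav("vibevoice_sample.wav", tone)
```

The resulting file can be dragged into Audacity, Adobe Audition, or Reaper. Converting to MP3 would need an external encoder, which this sketch does not cover.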

Best prompts optimised for VibeVoice

- Generate a heated spontaneous argument between two friends about a broken promise, with rising emotion, interruptions, and natural turn-taking: Speaker1: I can't believe you did it again. Speaker2: Wait, let me explain...

- Create a podcast episode discussing the latest AI advancements with two hosts and one guest expert, including background music fades, enthusiastic tones, and laughter: Host1 welcomes Guest, discusses GPT-5 launch...

- Synthesize a singer performing 'See You Again' with emotional delivery, slight vocal cracks for realism, and soft instrumental background: [lyrics here]

- Produce a cross-lingual conversation: Speaker in Mandarin expresses frustration, then switches to English with preserved emotional tone: Ni wei shen me zhe me zuo? Why did you do this?

- Generate a 10-minute tech podcast segment on climate change impacts with three speakers debating solutions, natural pauses, agreements, and background ambient music
